On bug bounty effectiveness

Smart contract changes do not get enough scrutiny from bounty hunters. Here’s how you can change that.

At Aurora, we have an impressive track record of running bug bounties, which includes rewarding a whitehat with the second-largest bounty in the history of crypto (so far!). One unique aspect of our bounty program is its large scope, so many problems become visible at our scale.

The burden of tracking on-chain changes is a major reason why bug bounty effectiveness declines over time. Below I explain why that is and share my thoughts on how we can solve it.

False sense of security

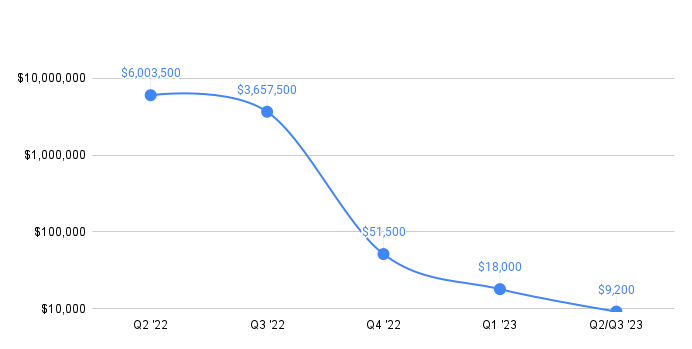

The first months after launching bounties are the most impactful (and stressful!). We've paid most of the rewards within three months of adding new contracts to the scope of our bug bounty programs.

The initial smart contract launch often comes with a press release, so bounty hunters rush to find low-hanging fruit missed by auditors. After some time, the likelihood that "many eyes" have missed something declines, the whitehats switch to easier targets and, naturally, the number of bug reports decreases.

Unfortunately, there is no proportional influx of bug reports when major new features are released.

The bounty scope does not explicitly change, so whitehats need to continuously keep track of significant changes in the smart contracts to assess the likelihood that new bugs have been introduced, which is a much harder task.

In search of a solution

There must be no ambiguity when new risky features get shipped to contracts that are already covered by the bounty program, so that bug hunters can focus on what they do best: finding bugs, not keeping up with release schedules.

To do that, we must reduce the information asymmetry between protocol developers and whitehats as much as possible and introduce new incentives to bring bug bounty effectiveness back to its initial level:

- Announce significant changes to the contracts that are already in scope

- Allow whitehats to claim rewards before these features hit the mainnet

- Provide an additional incentive for whitehats to audit such changes in a timely manner

Ideally, bug bounty platforms could have a feed of upcoming changes to the contracts that are already in scope, describing what has changed and incentivising researchers to audit them before a certain deadline. Unfortunately, most of the platforms do not provide these capabilities yet.
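To make the idea of such a feed more concrete, here is a minimal sketch of what a single feed entry and its deadline-based incentive could look like. Everything in it is an assumption made for illustration: the `ChangeAnnouncement` type, its field names, the `bonus_multiplier` mechanism and the helper functions are hypothetical and do not describe any existing platform or Aurora's actual program.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class ChangeAnnouncement:
    """One entry in a hypothetical feed of upcoming changes to in-scope contracts."""
    contract: str              # name of the in-scope contract (illustrative)
    summary: str               # what has changed
    review_deadline: datetime  # reports filed up to this moment earn the bonus
    bonus_multiplier: float    # assumed incentive, e.g. 1.5 means +50%


def reward_for(base_reward: float, reported_at: datetime,
               change: ChangeAnnouncement) -> float:
    """Apply the bonus only to reports filed on or before the review deadline."""
    if reported_at <= change.review_deadline:
        return base_reward * change.bonus_multiplier
    return base_reward


def open_for_review(feed: list[ChangeAnnouncement],
                    now: datetime) -> list[ChangeAnnouncement]:
    """Return the announcements whose review window has not closed yet."""
    return [change for change in feed if now <= change.review_deadline]


# Example entry; the contract name and numbers are made up.
example_change = ChangeAnnouncement(
    contract="ExampleBridge",
    summary="Adds a new withdrawal path behind a feature flag",
    review_deadline=datetime(2030, 1, 15, tzinfo=timezone.utc),
    bonus_multiplier=1.5,
)
```

With an entry like this, a whitehat would not need to diff releases to learn that something risky is coming: the feed states what changed, until when a review is most valuable, and what the extra incentive is.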

When it comes to Aurora, we are working with our partners on integrating the practice of audit contests directly into the bug bounty programs to address exactly this problem. The main difference from continuously running bounty programs is that such contests will be relatively short, to provide time-sensitive feedback, and will cover issues that are normally out of scope, e.g. non-production code that is behind feature flags, deviations from best practices, and performance issues that could hint at security problems.

In conclusion, I firmly believe that relieving whitehats of the burden of change tracking will significantly enhance the effectiveness of bug bounty programs, contributing to a more secure ecosystem. Stay tuned for insights and lessons learned as we roll out upcoming contests!